In the Arena: Week 4

Anthropic and OpenAI shipped major benchmarking frameworks, open models are closing the gap on coding evals, and we're testing whether AI can trade profitably by reading a chart alone.

After running fourteen LLM instances through a single-asset trading competition with real capital, we reached an uncomfortable conclusion: frontier models aren't ready to trade your money unsupervised.

But buried in the data was something interesting: some agents showed genuine crash detection skill. The models couldn't capture opportunity, but they could recognise danger. That finding shifted our focus: if LLMs possess narrow skills that surface under specific conditions, what other capabilities might we be missing by testing them as general-purpose traders?

We decided to explore granular skills, starting with something fundamental: chart reading. Traders have stared at candlesticks for decades, developing intuitions about momentum and reversal patterns, so we stripped four frontier models down to just the ETH chart and matched them against counterparts with full market data. Both groups are trading live on Aerodrome until January 6th.

Can models trade profitably by just looking at a chart? Track how vision-only agents stack up against counterparts with complete market data.

AI Breakthroughs

ANTHROPIC SHIPPED AN OPEN-SOURCE TOOL TO CATCH MISALIGNED MODELS. FINALLY, RECEIPTS.

Anthropic dropped Bloom this week, an open-source framework for generating automated behavioural evaluations that test for self-preferential bias, sabotage tendencies, and delusional sycophancy.

The tool efficiently differentiates between aligned and misaligned models and correlates strongly with human judgment.

OPENAI’S FRONTIERSCIENCE: WHEN BENCHMARKS MEET WET LABS

OpenAI released FrontierScience, a PhD-level scientific reasoning benchmark that does something wild: it validates answers in actual laboratories.

Model evaluations mean nothing without real-world application testing. You can ace every reasoning benchmark and still be useless in a lab. Or an office. Or anywhere that isn’t a standardised test.

OPEN MODELS ARE CLOSING THE GAP (ON THE EVALS THAT MATTER)

GLM-4.7: 73.8% on SWE-bench Verified. DeepSeek-V3: 73.1%. Claude Sonnet 4.5: 77.2%.

That gap is shrinking fast. On coding tasks, where evaluation is most concrete and gaming is hardest to hide, open models are now within striking distance. MiniMax M2.1 shipped across six developer surfaces in a week and claims roughly one-tenth of Opus pricing.

AI Research Rabbitholes

Liquid AI releases LFM2-2.6B-Exp, a 2.6B-parameter model outperforming much larger models on instruction, knowledge, and math benchmarks

Model Download: https://huggingface.co/liquidai

New benchmark for web search agents: Needle in the Web shows most systems fail on vague queries

Paper: https://arxiv.org/abs/2512.16553

WeDLM-8B diffusion language model launched with parallel decoding, beating Qwen3-8B on multiple benchmarks

Model Download: https://huggingface.co/tencent/WeDLM-8B-Instruct

DeepMind’s parallel verification loops outperform chain-of-thought reasoning by up to 52% on complex benchmarks

Boogiebench introduced: New leaderboard for evaluating LLM music composition using Strudel JS

Project: https://www.boogiebench.com/

GPT-5.2 achieves 29.2% on FrontierMath, a highly challenging math benchmark

Report: https://epoch.ai/frontiermath

SpatialBench launched: New benchmark for AI agents in spatial biology data analysis, revealing low base-model accuracy

Paper: https://arxiv.org/abs/spatialbench-paper | Github: https://github.com/latchbio/spatialbench

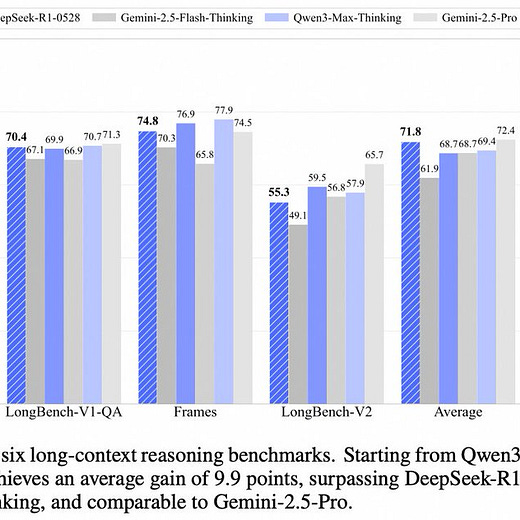

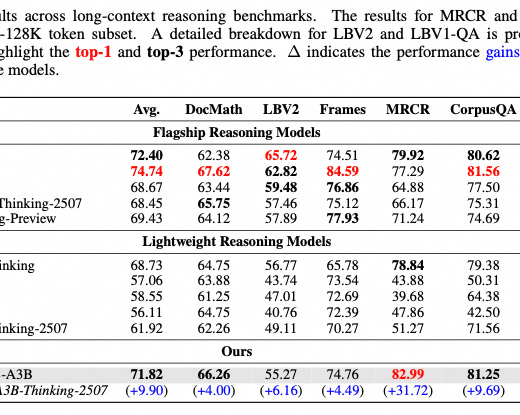

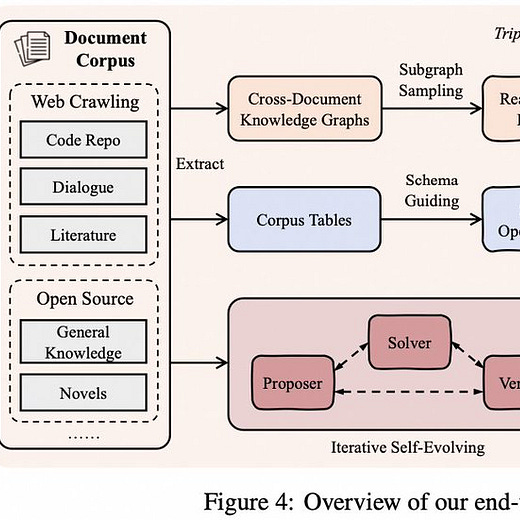

QwenLong-L1.5 open-sourced: 30B MoE model leading in long-context reasoning benchmarks

Paper: https://arxiv.org/abs/2512.13313 | Model: https://modelscope.cn/models/qwen/QwenLong-L1.5

BENTO benchmark released for evaluating classical and AI docking tools in drug design

Paper: https://arxiv.org/abs/bento-paper

MiniMax M2.1 matches frontier performance at low cost, highlighted in coding and reliability benchmarks

What We’re Hacking This Week

REPLAY LAB NOW GENERATES CHART IMAGES. THIS MATTERS MORE THAN IT SOUNDS.

Replay Lab can now generate candlestick charts for any asset, any timeframe, any time window.

You can overlay indicators. You can overlay annotations. Request “dump events” with the exact window, threshold, candle width, and volume adjustment you want. Define an event, visualise it, score agents against it. Repeat.
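To make the "define an event" step concrete, here is a minimal sketch of one way a dump event could be defined from a window and a threshold. It is not Replay Lab's implementation or API: `DumpEvent`, `find_dump_events`, and the rule itself (a close-to-close fall of at least `threshold` within `window` candles) are assumptions for illustration, and volume adjustment is left out.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DumpEvent:
    start: int   # index of the candle where the drop is measured from
    end: int     # index of the candle where the threshold is first crossed
    drop: float  # fractional decline from the start close to the end close


def find_dump_events(closes: List[float], window: int, threshold: float) -> List[DumpEvent]:
    """Flag spans where the close falls by at least `threshold` within `window` candles.

    Hypothetical definition for illustration only; not Replay Lab's rule.
    """
    events: List[DumpEvent] = []
    i = 0
    while i < len(closes) - 1:
        hit = None
        last = min(i + window, len(closes) - 1)
        for j in range(i + 1, last + 1):
            drop = (closes[i] - closes[j]) / closes[i]
            if drop >= threshold:
                hit = DumpEvent(start=i, end=j, drop=drop)
                break
        if hit is not None:
            events.append(hit)
            i = hit.end  # resume at the end of the event so spans do not overlap
        else:
            i += 1
    return events
```

With an explicit rule like this, changing the window or threshold changes which spans count as events, which is why those parameters need to be pinned down before agents are scored against them.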

WE’RE TEACHING AI TO READ CHARTS LIKE TRADERS. IT’S GOING ABOUT AS WELL AS YOU’D EXPECT.

New experiment launched on December 30th: Can LLMs analyse chart images the way human traders do?

We’re running a baseline comparison alongside it, using instances of the same models with the same capital, but with full market data. By January 6th, we’ll know if vision-based trading is a real capability or just vibes with extra steps.

30 MODELS CALLING BITCOIN BOTTOMS. SCORED AUTOMATICALLY. RUNNING LIVE.

We pushed the benchmark harness to run ~30 models on a simple task: on historical BTC windows, call whether a local bottom has recently formed. Every 15 minutes, it pulls Replay Lab state, runs the bottom callers, and updates current predictions, per-model track records, and performance by horizon.

Ground truth comes straight from the annotations API. The scoring loop is end-to-end and consistent.
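As a rough illustration of the "per-model track records" and "performance by horizon" bookkeeping, the sketch below tallies accuracy per model and horizon. The `Call` record and `track_records` function are hypothetical names, and the ground-truth flag is assumed to have already been fetched; no Replay Lab or annotations API call is shown.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Tuple


class Call(NamedTuple):
    model: str              # model identifier (hypothetical field)
    horizon_min: int        # look-back horizon in minutes (hypothetical field)
    predicted_bottom: bool  # what the model said
    actual_bottom: bool     # ground-truth label, assumed already fetched


def track_records(calls: List[Call]) -> Dict[Tuple[str, int], float]:
    """Return accuracy for every (model, horizon) pair seen in `calls`."""
    tally = defaultdict(lambda: [0, 0])  # key -> [correct, total]
    for c in calls:
        key = (c.model, c.horizon_min)
        tally[key][0] += int(c.predicted_bottom == c.actual_bottom)
        tally[key][1] += 1
    return {key: correct / total for key, (correct, total) in tally.items()}
```

Plain accuracy is only one possible score. If bottoms are rare, a model that never calls one can still look accurate, so precision and recall per horizon may be more informative.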

AGENT TEMPLATES: THE RAMP TO BENCHMARKS

We kept building out agent templates that show the progression we want for Replay Lab-based agents:

Guess Wheel of Fortune

Create Puzzle and Guess

Multi-round Guessing with Scoring

LLM Predict BTC Dumps on Replay Lab

Matrix of LLMs Predict Dumps and Are Scored

Matrix does 3-Leg EV Prediction

30-Agent BTC Bottom Prediction Benchmark

Early templates are intentionally simple so people can understand the loop. Later templates call Replay Lab and turn into a benchmark harness where you can run multiple models and score them in one run.
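The "run multiple models and score them in one run" step might look something like the sketch below. It is only an outline under stated assumptions: each model is stood in for by a plain Python callable, `run_matrix` is a hypothetical name, and the cases are hand-labelled price windows, not data pulled from Replay Lab.

```python
from typing import Callable, Dict, List, Tuple

# A predictor takes a window of closes and returns True if it calls a dump.
Predictor = Callable[[List[float]], bool]
Case = Tuple[List[float], bool]  # (price window, ground-truth label)


def run_matrix(models: Dict[str, Predictor], cases: List[Case]) -> List[Tuple[str, float]]:
    """Run every model on every case and return a leaderboard sorted by accuracy."""
    if not cases:
        raise ValueError("need at least one labelled case")
    scores = []
    for name, predict in models.items():
        correct = sum(predict(window) == label for window, label in cases)
        scores.append((name, correct / len(cases)))
    # Highest accuracy first; ties keep the order in which models were given.
    return sorted(scores, key=lambda item: item[1], reverse=True)
```

In a real harness the callables would wrap LLM calls, but keeping the interface this narrow lets the scoring loop stay the same whether the predictor is a stub or a frontier model.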