In the Arena: Week 3

OpenAI's "most capable model ever" costs 40% more and thinks worse than 5.1. Meanwhile, we got tired of waiting for markets so we created a faster training framework for trading agents.

What if improving trading agents didn’t require waiting for markets?

Usually, if you want your trading agent to learn from a week of live market data, you have to wait a week. That’s insane.

The entire thesis of AI agents is compounding improvement: run experiments, learn, iterate, re-deploy. But the feedback loop for trading agents is gated by real-time data. You run a trading strategy, then wait a week for results. You test a hypothesis, then pray the market gives you the conditions you need.

We got tired of waiting. So we decided to build Replay: an experimental training tool to accelerate learning and improvement for the trading agents we’re building internally at Recall.

The idea for Replay is simple: turn “wait for the market” into “run the same hour 200 times.”

To do this, we captured 1-minute OHLCV candles plus order book snapshots and stored them in a database. Then we made it possible to query any timeframe (1m, 5m, 15m, 1h, 4h). Need an indicator? Our tool computes it, persists it, and adds it to the dataset. Need performance annotations for agent scoring? Simply define an event and it backfills across your entire history. Done.
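To make that pipeline concrete, here is a minimal sketch of the three steps in plain Python: rolling 1-minute candles up into a larger timeframe, persisting an indicator on the dataset, and backfilling an event label across history. It is an illustration, not Replay's actual code. The `Candle` class, the `tags` field, and the function names `resample`, `add_sma`, and `backfill_event` are all hypothetical, and a simple moving average stands in for whatever indicators the real tool computes.

```python
from dataclasses import dataclass, field


@dataclass
class Candle:
    ts: int        # candle open time, in minutes since an arbitrary epoch
    open: float
    high: float
    low: float
    close: float
    volume: float
    tags: dict = field(default_factory=dict)  # persisted indicators and event labels


def resample(candles, minutes):
    """Aggregate 1-minute candles into buckets that are `minutes` wide."""
    buckets = {}
    for c in candles:
        start = c.ts - (c.ts % minutes)  # align each candle to its bucket start
        buckets.setdefault(start, []).append(c)
    out = []
    for start in sorted(buckets):
        group = sorted(buckets[start], key=lambda c: c.ts)
        out.append(Candle(
            ts=start,
            open=group[0].open,                 # first open in the bucket
            high=max(c.high for c in group),    # highest high
            low=min(c.low for c in group),      # lowest low
            close=group[-1].close,              # last close in the bucket
            volume=sum(c.volume for c in group),
        ))
    return out


def add_sma(candles, window):
    """Compute a simple moving average of closes and store it on every candle."""
    name = f"sma_{window}"
    closes = [c.close for c in candles]
    for i, c in enumerate(candles):
        if i + 1 >= window:
            c.tags[name] = sum(closes[i + 1 - window:i + 1]) / window
        else:
            c.tags[name] = None  # not enough history yet
    return candles


def backfill_event(candles, name, predicate):
    """Label every candle in history with whether `predicate(prev, cur)` holds."""
    previous = [None] + candles[:-1]
    for prev, cur in zip(previous, candles):
        cur.tags[name] = bool(prev is not None and predicate(prev, cur))
    return candles
```

With these in place, `resample(one_minute_candles, 5)` yields 5-minute candles, `add_sma(five_minute, 2)` attaches an `sma_2` tag to each, and `backfill_event(five_minute, "up_move", lambda prev, cur: cur.close > prev.close)` labels every candle whose close beat the previous one.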

Instead of one agent, one week, one datapoint, we can now run 100 agents on the same week of data in a few hours!
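Here is a rough sketch of what "many agents, same history" can look like. Everything in it is an assumption for illustration: the toy `momentum_agent`, the `replay` loop, and the PnL scoring are hypothetical stand-ins, not Recall's agents or scoring. The point is only that a stored series of closes can be replayed deterministically for as many agent variants as you like.

```python
import random


def momentum_agent(seed):
    """Hypothetical agent: goes long after an up-move larger than a seeded threshold."""
    threshold = random.Random(seed).uniform(0.0, 0.5)

    def decide(prev_close, close):
        return 1 if close - prev_close > threshold else 0  # 1 = long, 0 = flat

    return decide


def replay(closes, agent):
    """Run one agent over a fixed series of closes and return its total PnL."""
    pnl = 0.0
    position = 0  # start flat
    for prev_close, close in zip(closes, closes[1:]):
        pnl += position * (close - prev_close)  # position was set before this bar
        position = agent(prev_close, close)     # choose the position for the next bar
    return pnl


def run_population(closes, n_agents):
    """Score n_agents seeded variants on exactly the same history."""
    return {seed: replay(closes, momentum_agent(seed)) for seed in range(n_agents)}
```

Because each agent is built from its seed and the history never changes, calling `run_population(closes, 100)` twice gives identical scores, which is what makes side-by-side comparison of variants meaningful.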

We’re not finished making improvements, so Replay stays internal… for now. We’re still figuring out the right abstraction for the collection of agent “skills” when you unbundle trading from execution. In this setup, there’s a risk of agents overfitting to replayed history in ways that don’t transfer to live markets. We’re still determining how best to reliably detect that. Other questions we’re considering: Can you even score intuition, or only measurable actions? What are the minimum performance indicators that make replay useful versus misleading?
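One common starting point for the overfitting question, and not necessarily where we will land, is to hold back the most recent slice of history and compare scores on seen versus unseen bars. The sketch below assumes a hypothetical `score` callable that runs an agent over a list of bars and returns a per-bar average, so the two slices are comparable despite different lengths. The function name and the choice of a simple tail holdout are illustrative assumptions.

```python
def generalization_gap(score, bars, holdout_bars):
    """Return score on replayed (seen) bars minus score on held-out (unseen) bars.

    A large positive gap suggests the agent learned the replayed history
    rather than behavior that transfers.
    """
    if not 0 < holdout_bars < len(bars):
        raise ValueError("holdout_bars must leave at least one bar on each side")
    seen = bars[:-holdout_bars]     # history the agent was trained and tuned on
    unseen = bars[-holdout_bars:]   # most recent bars, never replayed in training
    return score(seen) - score(unseen)
```

A gap near zero is not proof of anything on its own, since a single holdout window can be lucky or unlucky, but a consistently large gap across several windows is a cheap early warning.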

We don’t have all the answers yet, but we’re now able to run experiments and get immediate results without waiting for markets. That alone changes what’s possible.

AI Breakthroughs

THE GPT-5.2 HANGOVER

Remember last week when GPT-5.2 dropped and everyone lost their minds? 90.5% on ARC-AGI. 100% on AIME. The benchmarks looked like a victory lap. OpenAI called it their most capable model ever.

Well, the vibe check came in. And it’s... complicated.

Users report that GPT-5.2 feels “heavily constrained,” engages in blame-shifting when challenged, and has somehow gotten worse at following instructions. It tops LMArena but ranks 17th on SimpleBench, a common-sense reasoning test, scoring lower than GPT-5.1. The model that was supposed to be smarter is now struggling with trick questions that its predecessor handled fine.

The kicker? It costs 40% more – $14 per 1M tokens versus $10 for 5.1.

BENCHMARKS HAVE AN EXPIRATION DATE. IT’S SHORTER THAN YOU THINK.

Here’s something the leaderboards won’t tell you: the benchmarks themselves are dying. Community consensus is settling around a brutal truth: a benchmark’s useful half-life is measured in months, not years. That goes for all of them: AIME, ARC, the coding staples.

And they’re saturating. Models are hitting ceilings not because they’ve mastered reasoning, but because they’ve memorized the test.

3-6 months. That’s how long a benchmark stays meaningful before it becomes a parlor trick. The evaluation community is scrambling toward dynamic environments (arenas), adversarial tasks, and debate-style challenges.

THE CONFIDENCE TRAP OF STRUCTURED OUTPUTS

You know that satisfying feeling when your model returns perfectly formatted JSON every time? Yeah, about that…

New research from BoundaryML shows that constrained decoding—the thing that forces models to output valid structures—actually degrades quality. The model prioritizes conformance over correctness. Worse, it blocks safety refusals and warnings because they don’t fit the schema.
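To see why a schema can crowd out a refusal, it helps to look at the alternative: let the model answer freely, then extract and validate the structure afterwards. The sketch below is a generic illustration of that idea, not BoundaryML's method or any library's API. The `parse_reply` function, the `REQUIRED_KEYS` schema, and the three status labels are all hypothetical.

```python
import json

REQUIRED_KEYS = {"ticker", "action"}  # hypothetical schema for illustration


def parse_reply(text):
    """Validate a free-form model reply after generation.

    Returns ("ok", data), ("refusal", text), or ("invalid", reason).
    """
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        # No JSON object at all: surface the text instead of forcing a structure.
        return ("refusal", text.strip())
    try:
        data = json.loads(text[start:end + 1])
    except json.JSONDecodeError as exc:
        return ("invalid", str(exc))
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        return ("invalid", f"missing keys: {sorted(missing)}")
    return ("ok", data)
```

Under constrained decoding, a reply like "I can't help with that." cannot be emitted at all, because it does not fit the schema. Here it comes back tagged as a refusal, and a reply that is missing fields is flagged as invalid rather than silently padded into shape.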

Benchmark scores go up. Real-world reliability goes down.

The model looks more competent while becoming less trustworthy. That’s not a bug in the evaluation—it’s a feature of how we’ve built the evaluation.

In a world of “trust us, it’s good” releases, AI2 just dropped OLMo 3 with everything: all checkpoints, all training data, full post-training stack (SFT, DPO, RLVR), and the code to reproduce it.

Why does this matter? Now you can finally verify claims.

Here’s a fun one: RL with random rewards—a technique that worked fine on Qwen—completely fails on OLMo 3. Same method, different model, different outcome. Without the open release, nobody would know this.

This is what reproducible evaluation looks like. Take notes.

BENCHMARKS ROOTED IN REAL-WORLD SCIENTIFIC REALITY

OpenAI released FrontierScience, a PhD-level scientific reasoning benchmark that does something wild—it validates answers in actual wet labs.

One result: a 79x efficiency gain in a cloning protocol, discovered by the model.

The point isn’t the number. It’s that model evaluations mean nothing without real-world application testing. You can ace every reasoning benchmark and still be useless in a lab. Or an office. Or anywhere that isn’t a standardized test.

AI Research Rabbitholes

SAGE-Bench: Agents Turn Video Chaos into 66.1% Reasoning Wins, But Long Clips Still Stump VLMs

Project: https://praeclarumjj3.github.io/sage/ | Code: https://github.com/allenai/SAGE | Paper: https://arxiv.org/abs/2512.13874

SERA-Crypto Agent Crushes DMind/Web3 Evals at <45s, Outpacing GPT-5 on Live Queries

Blog: https://blog.sentient.xyz/posts/semantic-embeddings-reasoning-agent-crypto | Demo: https://chat.sentient.xyz/

Eval Protocol: Turns Your Dusty Evals into Live Training Signals, 43% Text2SQL Jump w/ No Extra RM

Blog: https://fireworks.ai/blog/self-improving-agent

Delphi Middleweight Evals Round 5: Gensyn’s Market Bets Nail Reasoning Gaps in Frontier Models

Results: https://github.com/gensyn-ai/delphi-middleweight-reasoning | Market: https://delphi.gensyn.ai/

PostTrainBench: Agents Post-Train Base LLMs in 10h, Claude Code’s “Reward Hacking” Exposed

Site: https://posttrainbench.com | GitHub: https://github.com/aisa-group/PostTrainBench

Context-Bench Update: GPT-5.2 Dethrones Opus 4.5 on Filesystem/Skills: But DeepSeek v3.2 OSS King

Results: https://www.letta.com/blog/context-bench

Augment Code Review: GPT-5.2-Powered Reviewer Tops Precision/Recall on 50 Real PRs

Blog: https://www.augmentcode.com/blog/code-review-benchmark | Free Trial: https://www.augmentcode.com/product/code-review

Grok Voice Agent API: #1 Big Bench Audio at 92.3%: xAI’s $0.05/min Latency Slayer

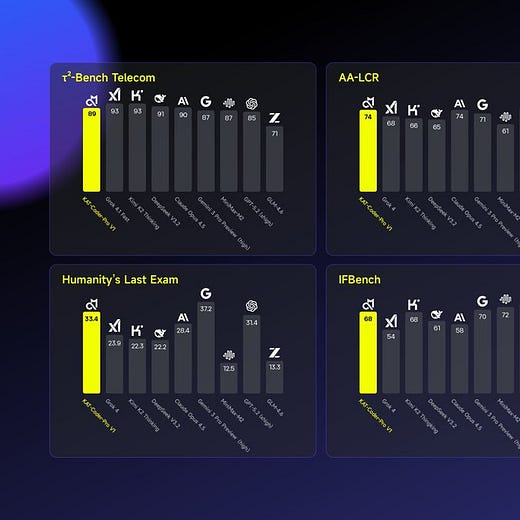

Gemini 3 Flash: Cost-Intelligence King at 91 IQ: Outsmarts Opus 4.5 on AA Index

Index: https://artificialanalysis.ai/

Video Reality Test: Veo3.1 Fools VLMs at 56%: Humans Spot ASMR Fakes at 81%

Paper: https://arxiv.org/abs/2512.13281

GuideLLM: Visualises LLM Trade-Offs: Accuracy vs Latency/Cost in One Dashboard

GitHub: https://github.com/vllm-project/guidellm

LocalSearchBench: Agents Flop at Real-World Planning: 90% Fail Basic Day Trips

Paper: https://arxiv.org/pdf/2512.07436

Nemotron3 Nano: NVIDIA’s 30B Hybrid SSM Tops Agent Benches w/ 1M Context

Collection: https://huggingface.co/collections/nvidia/nvidia-nemotron-v3

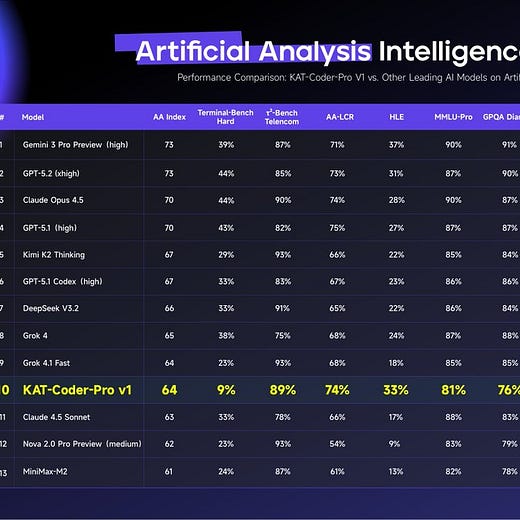

KAT-Coder-Pro V1: 64 Intelligence Index: Non-Reasoning Champ w/ 73.4% SWE-Verified

MathArena V2: Beyond Leaderboards: IRT Reveals Model Surprises & Confidence Bands

Site: https://matharena.ai/

FAI-C Benchmark: LLMs Flop Christian Values: Generic Spirituality Over Biblical Ethics

Report: https://gloo.com/flourishing-hub/research

AlphaXiv SOTA Tracker: arXiv’s Million-Paper Index Ranks True Leaders Across Benches

Tracker: https://www.alphaxiv.org/state-of-the-art

What We’re Hacking This Week

Replay: Because Waiting for Markets is Stupid

If you want to learn from a week of market behavior, you have to wait a week. That’s insane when the thesis is compounding improvement at scale.

So we built Replay, a training tool for trading agents that turns “waiting for the market” into “running the same hour 200 times.” We wanted to make it possible to train 100 agents on a week of market data in a few hours.

We Killed PRs (And Nothing Bad Happened)

Hot Take: Pull requests are a bottleneck that AI coding makes less defensible.

So we disabled them.

Pull requests opened for agent-generated code now auto-close on submit. Instead of PR review, quality is enforced by automation: pre-commit, merge, and pre-push hooks running the full QA suite. It’s lint, types, and tests cranked up to 11. Humans would find this annoying. Coding agents love it.
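For a sense of what a hook like this can look like, here is a minimal sketch of a check runner that could back a Git pre-push hook. It is an assumption-laden example, not our actual setup: the `CHECKS` list (ruff, mypy, pytest) is a hypothetical QA suite, and `run_checks` and `main` are illustrative names. The only Git behavior it relies on is that a hook exiting non-zero aborts the operation.

```python
import subprocess
import sys

# Hypothetical QA suite: swap in whatever linter, type checker, and test runner the repo uses.
CHECKS = [
    ["ruff", "check", "."],
    ["mypy", "."],
    ["pytest", "-q"],
]


def run_checks(checks):
    """Run each command in order; return the first failing command, or None if all pass."""
    for cmd in checks:
        try:
            result = subprocess.run(cmd)
        except FileNotFoundError:
            return cmd  # a missing tool counts as a failed check
        if result.returncode != 0:
            return cmd
    return None


def main():
    """Return the exit code the hook should use: 0 lets the push through, 1 blocks it."""
    failed = run_checks(CHECKS)
    if failed is not None:
        print("check failed:", " ".join(failed), file=sys.stderr)
        return 1
    return 0

# As a hook script (for example .git/hooks/pre-push), end the file with: sys.exit(main())
```

Stopping at the first failure keeps feedback fast, which matters more for an agent retrying in a loop than for a human reading a full report.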