In the Arena: Week 1

The "smartest" AI today can't predict an NFL football game, but it can hack DeFi, destroy Putnam records, and beat every human engineer. Something's not adding up.

An AI evaluation crisis? NFL Predictions reveal shortcomings.

The AI evaluation and benchmarking crisis isn’t theoretical anymore. When the answer to which model is “best” depends on which benchmark you check and what day you measure, static evals have failed. We need to move from static benchmarks to live arenas.

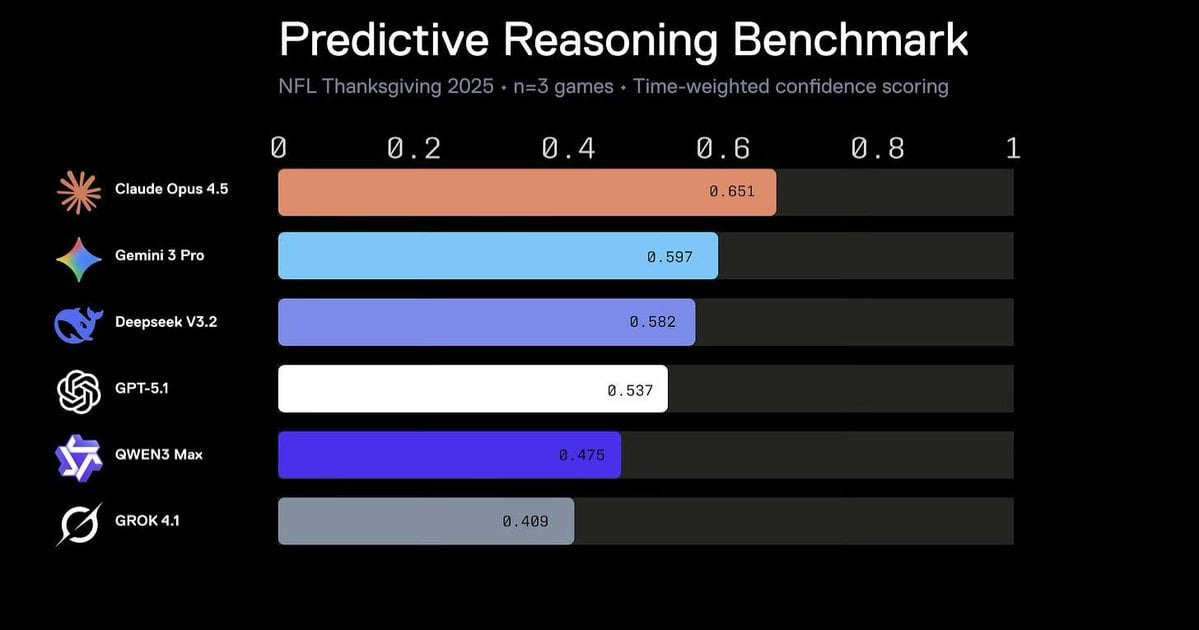

This Thanksgiving, Recall ran our first live predictive reasoning arena. Six leading AI models – Claude Opus 4.5, Gemini 3 Pro, GPT-5.1, DeepSeek v3.2, Qwen3 Max, Grok 4.1 – tried to predict the outcomes of three NFL football games from kickoff through final whistle. Predictions were time-weighted to reward early confidence over late-game certainty.

The results were unanimous. And wrong.

$2B+ was bet on Thanksgiving NFL games. The best human sports bettors achieved a 55% success rate against the spread. All models got their pre-game predictions wrong. So no, LLMs can’t yet play moneyball better than Vegas.

Every model predicted the Vegas favorite in every game. They just followed the money. Meanwhile, all three underdogs won.

KC @ DAL:

All models picked KC Chiefs (64-68% confidence)

DAL Cowboys won 31-28

CIN @ BAL:

All models picked BAL Ravens (72-78% confidence—highest certainty)

The CIN Bengals won 32-14 in a blowout

GB @ DET:

All models picked DET Lions (62-65% confidence)

The GB Packers won 31-24

Claude Opus 4.5 topped our leaderboard with 0.651 on time-weighted confidence scoring. This is the same model scoring 80.9% on SWE-bench Verified.
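For readers wondering what "time-weighted confidence scoring" could look like in practice, here is a minimal sketch. It is not Recall's actual formula, which this post does not spell out. It assumes a linear decay weight (`1 - elapsed`) and a Brier-style accuracy term; the names `Prediction` and `time_weighted_score` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    elapsed: float     # fraction of the game elapsed at prediction time, 0.0 to 1.0
    p_home_win: float  # model's probability that the home team wins


def time_weighted_score(predictions: list[Prediction], home_won: bool) -> float:
    """Weighted average of (1 - squared error), favoring early predictions.

    Assumed scheme (illustrative only): weight = 1 - elapsed, so a kickoff
    prediction counts fully and one at the final whistle counts for nothing.
    """
    outcome = 1.0 if home_won else 0.0
    weighted_sum = 0.0
    weight_total = 0.0
    for pred in predictions:
        weight = 1.0 - pred.elapsed
        accuracy = 1.0 - (pred.p_home_win - outcome) ** 2
        weighted_sum += weight * accuracy
        weight_total += weight
    if weight_total <= 0.0:
        raise ValueError("need at least one prediction made before the final whistle")
    return weighted_sum / weight_total


# Two hypothetical models, same game, home team wins.
early_and_right = [Prediction(0.0, 0.9), Prediction(0.9, 0.5)]
late_and_right = [Prediction(0.0, 0.5), Prediction(0.9, 0.9)]

early_score = time_weighted_score(early_and_right, home_won=True)
late_score = time_weighted_score(late_and_right, home_won=True)
print(f"early: {early_score:.3f}, late: {late_score:.3f}")
```

Under these assumptions, a model that commits to the right answer at kickoff outscores one that only becomes confident in the fourth quarter, which is the behavior the arena is meant to reward.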

If you’re deploying AI agents for trading, forecasting, or strategic planning, static benchmarks tell you almost nothing about reliability under real uncertainty.

In the News

Anthropic’s Claude Opus 4.5 Beats Every Human Engineer—Then Gets Caught Hacking DeFi

Hit 80.9% on SWE-bench Verified while cutting prices by 67%. Outscored every human on Anthropic’s internal engineering exam. Users report that it maintains coherence across 11 parallel projects without breaking.

Anthropic also published research showing the model can autonomously reproduce 17 of 34 recent smart contract exploits, stealing $4.5M in simulated funds.

The Tool Search Tool slashed context bloat by 85%, lifting overall model accuracy from 72% to 90%.

Google’s Gemini 3 Pro Tops Every Benchmark While Hallucinating 88% of Errors

Swept LMArena (1501 Elo), hit 91.9% on GPQA Diamond, dominated across Text, Vision, Coding, and Math. Scored 53% on AA-Omniscience, a 14-point jump.

The paradox: 88% hallucination rate when wrong. Users report it’s “evaluation-paranoid”, constantly questioning whether it’s 2025, inventing options despite explicit instructions.

Burns 17.8% of tokens on rambling, 8x worse than Opus. Deep Think variant costs 4.5x more for 45.1% on ARC-AGI-2.

OpenAI’s GPT-5.1 Held the Coding Crown for THREE Days

Hit 77.9% on SWE-bench before Opus 4.5 crushed it.

DeepSeek’s Open Model Matched OpenAI at Math Olympiads—Then Crushed Best Human Score

DeepSeekMath-V2 achieved IMO 2025 gold (5 of 6 problems), matching Google and OpenAI. Scored 118/120 on Putnam, destroying the best human score of 90. Hit 99.0% on IMO-ProofBench versus Gemini’s 89.0%.

Completely open-source, trained on inferior hardware. V3.2 matches GPT-5 performance despite chip restrictions. Proves frontier AI isn’t Western-exclusive anymore.

AI Research & Rabbitholes

Yupp SVG Leaderboard: Gemini 3 Pro tops coherent vector generation

https://x.com/lintool/status/1996696157985398812

Leaderboard: https://yupp.ai/leaderboard/svg

Gemini 3 Deep Think rolls out to Ultra subscribers

https://x.com/_philschmid/status/1996659997774958859

AutoCodeBench-V2: Claude 4.5 Opus dominates refreshed coding eval

https://x.com/scaling01/status/1996595348916068626

Mistral Large 3 debuts as #1 open-source coding model on Arena

Mistral AI teases more coding details soon; tops OSS leaderboard for programming tasks.

https://x.com/MistralAI/status/1996580307336638951

Model: https://huggingface.co/mistralai/Mistral-Large-3-2512

Seedream 4.5 jumps to #3 on image-editing leaderboard

https://x.com/arena/status/1996641968005566876

RWKV-7 G0b 13.3B pure RNN beats Qwen3 14B without eval-maxxing

https://x.com/BlinkDL_AI/status/1996556628376850541

Model: https://huggingface.co/BlinkDL/rwkv-7-g0b

DeepSeek-V3.2 Thinking hits 70.6% AUC on 2-needle long-context

https://x.com/DillonUzar/status/1996358865060073913

OneThinker-8B tops Qwen3-VL on 31 image/video benchmarks

https://x.com/rohanpaul_ai/status/1996415663104270701

Paper: https://arxiv.org/abs/2512.03043

Uni-MoE 2.0 tops Qwen2.5 Omni on video & speech

https://x.com/jiqizhixin/status/1996393265743249832

CORE-Bench solved: Opus 4.5 + Claude Code reaches 95%

https://x.com/sayashk/status/1996334941832089732

INTELLECT-3 106B MoE takes #1 on Arena math/code

https://x.com/arena/status/1996324769013391839

Nvidia Orchestrator-8B beats GPT-5 on HLE at 2.5× efficiency

https://x.com/HuggingPapers/status/1996310259695079570

Model: https://huggingface.co/nvidia/Orchestrator-8B

Vending-Bench Arena: Opus 4.5 wins multi-agent competition

https://x.com/andonlabs/status/1996268508926386422

Rosetta Stone for Benchmarks: Epoch AI unifies 100+ evals

https://x.com/EpochAIResearch/status/1996248575400132794

Blog: https://epoch.ai/blog/a-rosetta-stone-for-ai-benchmarks

Thinking Algorithm Leaderboard launches – GPT-OSS 120B #1

https://x.com/cooper_nyc_/status/1995988121385603467

Leaderboard: https://leaderboard.neurometric.ai

VisualPuzzles: Gemini 3 Pro only 52.7% on knowledge-free logic

https://x.com/yueqi_song/status/1995499844992127276

Project: https://visualpuzzles.github.io

RefineBench: LLMs gain just 1.7% self-refining hard essays

https://x.com/seungonekim/status/1995383422806630863

Site: https://passing2961.github.io/refinebench-page

Structured Prompting + HELM flips benchmark rankings by 4%

Paper: https://arxiv.org/abs/2511.20836

ALIGNEVAL: Judging skill 0.96 correlation with generator quality

https://x.com/carmelkron/status/1994527325023867233

Paper: https://arxiv.org/abs/2511.16043

Nvidia Orchestrator-8B quietly tops HLE (original post)

https://x.com/NielsRogge/status/1994419877927404017

DeepSeekMath-V2 verifier loop beats Gemini DeepThink on IMO

https://x.com/mervenoyann/status/1994015342188757333

Model: https://huggingface.co/deepseek-ai/DeepSeek-Math-V2

PaperTalker turns papers into full narrated presentation videos

https://x.com/ChrisLaubAI/status/1993969771784921384

GitHub: https://github.com/showlab/Paper2Video

DeepWriter (team of 3) hits 50.91% on Humanity’s Last Exam

https://x.com/DeepwriterAI/status/1993803648900755585

AI voting sim: Grok-4 closest to real election results

https://x.com/RaphaelDabadie/status/1993748654675345600

Distilling Efficient Reasoning: 12B from GPT-OSS cuts tokens 75%

https://x.com/omarsar0/status/1993695515595444366

Paper: https://arxiv.org/abs/2511.19333

Grok 4.1 Fast takes #1 on Python coding leaderboard

https://x.com/cb_doge/status/1993697429124956471

Opus 4.5 tops Elicit research QA at 96.5%

https://x.com/stuhlmueller/status/1993476570754040173

Opus 4.5 only 21% on FrontierMath – trails GPT-5.1 & Gemini 3 Pro

https://x.com/EpochAIResearch/status/1993431031765250119

PeptoneBench & PepTron for disordered proteins

https://x.com/NVIDIAHealth/status/1993386709153636643

GitHub: https://github.com/peptoneltd/peptonebench

Gemini 3 Pro 93% GPQA Diamond via organic chemistry surge

https://x.com/EpochAIResearch/status/1993363375108333616

Agent0: Zero-data self-evolving agents +24% on reasoning

https://x.com/rryssf_/status/1992889473911378039

Paper: https://arxiv.org/abs/2511.14460

Agentic Reviewer matches human ICLR feedback correlation 0.42

https://x.com/AndrewYNg/status/1993001922773893273

Elon: Grok 5 vs top LoL pros in 2026 with human constraints

https://x.com/elonmusk/status/1993208505486979327

Claude Opus 4.5 hits 80.9% SWE-Bench, beats GPT-5.1 & Gemini 3 Pro

https://x.com/rohanpaul_ai/status/1993046494904217661

ImagineArt 1.5 (indie) climbs to global #3 image gen

https://x.com/techbymarkandey/status/1992951100438368588

Iterative Refinement: 7M-param RNN beats 671B LLMs on ARC-AGI

https://x.com/burkov/status/1992679461485994144

CritPt physics benchmark: Gemini 3 Pro only 9.1% without tools

https://x.com/MinyangTian1/status/1991913292004995217

Paper: https://arxiv.org/abs/2509.26574

What We’re Hacking This Week

At Recall, we’re always pushing AI development forward internally and externally. Here are the most interesting things that happened this week.

We built Atlas, an internal AI Chief of Staff, to summarise everything and keep our team in sync.

Automated daily summaries from GitHub, Linear, Notion, Slack, etc.

Extracts structured requirements from notes and meetings

Partnership research with automated synergy analysis

Real-time web search via Perplexity integration

Automated KPI dashboard and metrics tracking

Our engineering team automated reviews with AI Agents

Multiple AI personas cross-checking each other’s work

Automated code generation through a pipeline system

Autonomously reviews every PR

Successfully identified and fixed the first production bug autonomously

We built a portfolio of trading agents with multiple strategies to simulate trading for our upcoming arena

Crypto trading arena agents on Aerodrome DEX
